- Business Challenge:

A German industrial manufacturing enterprise aimed to productionize machine learning models for predictive maintenance across IoT-enabled equipment. While initial models performed well in isolated environments, the organization faced significant challenges when scaling them into a reliable, production-grade system.

Key challenges included:

- High-throughput, low-latency ingestion of time-series sensor data generated by thousands of industrial assets

- Inference latency bottlenecks caused by non-optimized batch processing and lack of real-time scoring capabilities

- Absence of continuous model observability, including drift detection, performance degradation, and data quality monitoring

- Operational complexity in managing distributed ML infrastructure, spanning data ingestion, feature processing, model training, deployment, and lifecycle governance

As a result, the organization experienced delayed failure detection, increased unplanned downtime, and limited confidence in model-driven maintenance decisions.

- Solution Provided by CloudZen Innovations:

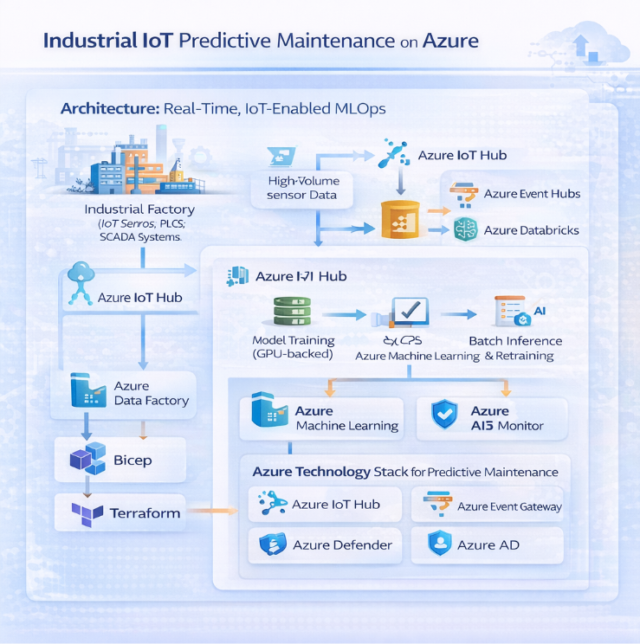

CloudZen implemented a real-time, IoT-enabled MLOps platform on Microsoft Azure, designed to ingest high-frequency sensor data, perform low-latency inference, and continuously adapt models based on evolving equipment behavior. The platform supports both real-time and batch ML workloads while maintaining full lifecycle governance and observability.

- Streaming Ingestion & Feature Engineering Pipelines

- High-velocity IoT telemetry is ingested using Azure IoT Hub and Azure Event Hubs, supporting millions of events per second.

- Stream processing and feature extraction are performed using Azure Databricks (Structured Streaming), enabling real-time aggregation, windowing, and anomaly feature generation.

- Curated features are persisted in Azure Data Lake Storage Gen2, serving as a unified feature store for both training and inference.

- Schema evolution and data quality checks are enforced to handle heterogeneous sensor payloads across multiple asset types.
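
The windowed aggregation step can be illustrated with a minimal, plain-Python sketch. It is not the Databricks Structured Streaming job itself; it only shows the tumbling-window logic on an in-memory list. The record layout `(asset_id, epoch_seconds, value)`, the 60-second window, and the function name `tumbling_window_features` are assumptions made for illustration.

```python
from collections import defaultdict
from statistics import mean, pstdev


def tumbling_window_features(readings, window_seconds=60):
    """Group sensor readings into fixed, non-overlapping windows per asset.

    readings: iterable of (asset_id, epoch_seconds, value) tuples
              (hypothetical layout, not taken from the source system).
    Returns a dict keyed by (asset_id, window_start) with summary features.
    """
    buckets = defaultdict(list)
    for asset_id, ts, value in readings:
        # Align each timestamp to the start of its window.
        window_start = int(ts // window_seconds) * window_seconds
        buckets[(asset_id, window_start)].append(value)

    features = {}
    for key, values in buckets.items():
        features[key] = {
            "count": len(values),
            "mean": mean(values),
            "std": pstdev(values),  # population std; 0.0 for a single reading
            "max": max(values),
        }
    return features


sample = [("pump-1", 0, 1.0), ("pump-1", 30, 3.0), ("pump-1", 61, 5.0)]
window_features = tumbling_window_features(sample)
```

In a streaming engine the same grouping would typically be expressed as a window over an event-time column, with watermarking to bound late data; this sketch omits both.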

- Real-Time and Batch Inference Architecture

- Real-time inference is deployed using Azure Machine Learning managed online endpoints, optimized for low-latency scoring of streaming sensor data.

- Batch inference workloads are executed via Azure ML batch endpoints to analyze historical telemetry for degradation trends and long-term failure prediction.

- Models are containerized and versioned, enabling consistent execution across development, staging, and production environments.

- Autoscaling policies dynamically adjust compute resources based on event throughput and inference demand.
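
As a rough illustration of throughput-based autoscaling, the sketch below derives a target replica count from the observed event rate. The per-replica capacity, the minimum and maximum bounds, and the function name `target_replicas` are hypothetical; the actual scaling rules configured on the endpoints are not described in the source.

```python
import math


def target_replicas(events_per_second, per_replica_capacity,
                    min_replicas=2, max_replicas=10):
    """Return a replica count sized for the observed event rate.

    All parameter values are illustrative assumptions, not measured figures.
    """
    if per_replica_capacity <= 0:
        raise ValueError("per_replica_capacity must be positive")
    # Round up so the fleet can absorb the full observed load.
    needed = math.ceil(events_per_second / per_replica_capacity)
    # Clamp to the configured floor and ceiling.
    return max(min_replicas, min(max_replicas, needed))
```

For example, with an assumed capacity of 1,000 events per second per replica, a load of 2,500 events per second rounds up to three replicas, while very low or very high loads are held at the floor and ceiling.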

- Automated Retraining Triggered by Sensor & Data Drift

- Data drift and concept drift are continuously monitored using Azure ML data drift capabilities and custom statistical checks.

- Retraining pipelines are automatically triggered when drift thresholds are breached or when new labeled maintenance data becomes available.

- End-to-end retraining workflows are orchestrated using Azure ML pipelines, covering data preparation, model training, validation, and registration.

- Model promotion is governed through CI/CD pipelines with approval gates to ensure safe and controlled rollout into production.
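
One possible form of a custom statistical drift check is the Population Stability Index (PSI), sketched below together with a retraining decision. The source does not say which statistics were used, so PSI, the 0.2 threshold (a common rule of thumb), the 500-label minimum, and both function names are assumptions.

```python
import math


def population_stability_index(reference, current, bins=10):
    """Compare two samples of one feature using equal-width bins.

    Bin edges come from the reference sample; out-of-range values in the
    current sample are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def should_retrain(reference, current, threshold=0.2,
                   new_labels=0, min_new_labels=500):
    """Trigger retraining on drift or on enough newly labeled records."""
    drifted = population_stability_index(reference, current) > threshold
    return drifted or new_labels >= min_new_labels


baseline = [i / 100 for i in range(100)]
shifted = [v + 0.5 for v in baseline]
```

Here `should_retrain(baseline, shifted)` fires because half of the reference bins are empty in the shifted sample, while `should_retrain(baseline, baseline)` does not. In a pipeline, a positive result would submit the retraining workflow rather than return a boolean.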

- Centralized Observability & Operational Governance

- Azure Monitor and Log Analytics provide centralized visibility into infrastructure health, pipeline execution, and endpoint performance.

- Model-level metrics such as inference latency, prediction confidence, error rates, and throughput are continuously tracked.

- Operational dashboards enable correlation between model predictions and actual maintenance outcomes, improving trust and decision-making.

- End-to-end auditability is maintained across data, models, and deployments, supporting compliance and operational transparency.
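
Correlating predictions with actual maintenance outcomes can be reduced, in its simplest form, to precision and recall over matched records. The sketch below assumes each record pairs a predicted-failure flag with the confirmed outcome; that record shape and the function name `alert_precision_recall` are hypothetical.

```python
def alert_precision_recall(records):
    """Compute precision and recall of failure alerts.

    records: iterable of (predicted_failure, actual_failure) booleans,
             an assumed shape for joined prediction and work-order data.
    """
    tp = fp = fn = 0
    for predicted, actual in records:
        if predicted and actual:
            tp += 1  # alert raised and a failure was confirmed
        elif predicted and not actual:
            fp += 1  # alert raised but no failure found
        elif actual:
            fn += 1  # failure occurred without an alert
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


outcomes = [(True, True), (True, False), (False, True), (False, False)]
precision, recall = alert_precision_recall(outcomes)
```

Tracking these two numbers over time per asset type is one way a dashboard could show whether alerts remain trustworthy.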

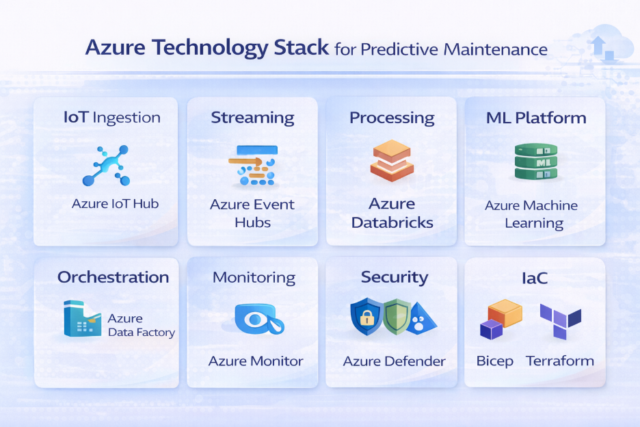

- Technology Stack:

This solution leverages a fully Azure-native technology stack to support real-time IoT data ingestion, scalable machine learning workflows, and secure production deployments. Azure IoT and streaming services handle high-velocity sensor data, while Azure Databricks and Azure Machine Learning enable feature engineering, model training, and inference at scale. CI/CD automation, centralized monitoring, and enterprise-grade security services ensure reliability, governance, and operational efficiency across the entire MLOps lifecycle.

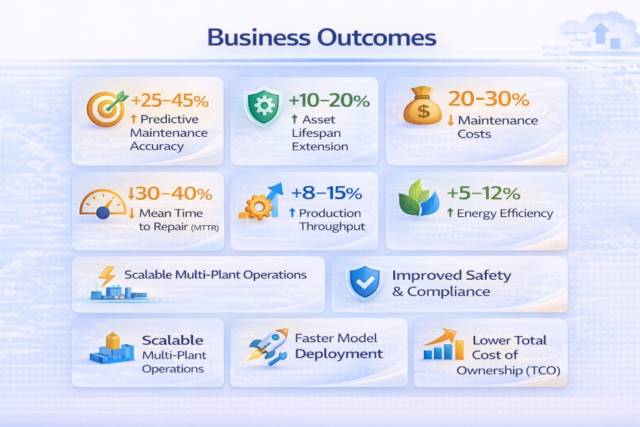

- Business Outcome:

By implementing a real-time, Azure-based IoT MLOps platform, the organization transitioned from reactive to predictive maintenance. The solution enabled earlier detection of equipment anomalies, reduced unplanned downtime, and improved asset utilization. Continuous model monitoring and automated retraining ensured sustained prediction accuracy, while scalable cloud infrastructure lowered operational costs and supported expansion across multiple plants.